What (some) people think about cultured meat

Results from a pilot study of UC San Diego undergraduates (final N = 184).

As I’ve written before, I’m interested in “cultured” (i.e., lab-grown) meat. I think it’s one (hopefully) viable path towards reducing our dependence on factory farming, along with its impacts on animal suffering, environmental degradation, and the spread of zoonotic diseases.

And as a social scientist, one of the best ways I can think of to make an impact on this issue is to identify the psychological and cultural barriers to widespread adoption of cultured meat. Thus, over the past few months, I’ve been reading relevant research articles, writing reviews of these articles, and planning out (and conducting) research of my own. I’m not yet at the point of conducting a large-scale survey of the kind I think is necessary for generalizable insights. But I have conducted a smaller-scale “pilot” survey exploring some of the ideas I’m interested in; that’s what this post is about.

Background

Why I ran this pilot survey

In behavioral research, a “pilot” experiment is something you run before you’re ready to run the “real” version of your experiment. It’s useful for:

Testing the viability and feasibility of a study design. This might refer to the viability of a scientific hypothesis, but it can also literally mean technical feasibility: e.g., testing your survey for bugs, ensuring your platform for recruiting participants works, and so on.

Replicating past work. This is useful both for checking the robustness of previous findings and simply getting to know the details of their implementation better—there’s often a big gap between reading a result in a paper and actually building a survey and analysis pipeline from scratch to replicate that finding.

Generating novel hypotheses. Sometimes, you don’t know exactly what to look for. Exploratory data analysis is incredibly valuable for research progress, but you have to be careful it doesn’t turn into a p-hacking expedition (i.e., searching for any significant result you can find and publishing that as if it had been your question all along). Pilot studies can be useful for conducting exploratory analyses, which in turn can be used to generate hypotheses for future work. This is roughly the behavioral science version of cross-validation in machine learning, which guards against overfitting: hypotheses generated on one dataset get tested on fresh data.

Caveats, or: what this pilot won’t tell us

Any research study has caveats; a pilot study has even more. Here are some of the main issues as I see them.

The most obvious issue with this pilot is the sample. Ideally, samples should be random and representative of the population you’re interested in. Psychology and other social sciences have well-known issues with biased samples: most subjects come from “WEIRD” countries (Western, Educated, Industrialized, Rich, and Democratic), yet psychologists are often interested in drawing conclusions about humans in general. My sample has many of the usual issues: the subjects are undergraduates at a four-year university in the United States (UC San Diego) and are therefore not representative of the broader market for meat or cultured meat (in their political views, household income, level of education, and more). Further, because they’re mostly Psychology or Cognitive Science majors, the sample skews heavily female (~84%).

Another caveat has to do with the measurements themselves. There’ll be more details on this in the section below, but there’s always a potential gap between the thing we’re trying to measure (e.g., disgust sensitivity) and the instrument we’re using to measure it (e.g., the 26-question “Disgust Scale”). The same goes for the outcome measures: we can ask people how willing they are to eat cultured meat, but it’s impossible to know whether this would translate reliably to actual behavior. People are in general not great at predicting their own behavior, and there are various social pressures (e.g., social desirability bias) that might lead them to respond a certain way in a survey.

Finally, at least some of the analyses below are exploratory, which raises the possibility that the findings are spurious. (That would be true even if the other two issues I’ve raised were sorted out.) I’ll be clear about which analyses are exploratory, but I wanted to flag this up front.

What I did

Getting the data

I recruited 200 undergraduate UC San Diego students using Sona, UCSD’s internal recruiting platform. The students filled out a survey that I designed on Qualtrics, a tool for quickly and easily making web surveys.

After filling out their consent forms, participants responded to some questions about basic demographic information: age, self-identified gender, political affiliation, dietary preferences, highest level of education achieved, household income bracket, personal income bracket, and occupation.[1] I included these factors specifically because, as I’ve discussed elsewhere, they’ve all been correlated with willingness to consume cultured meat in past work.

Participants then filled out the Disgust Scale, a set of 26 questions designed to probe disgust sensitivity. This specific instrument has been used in past work, e.g., this 2019 study on psychological predictors of attitudes towards cultured meat. Answers to these 26 questions can be combined to create a composite score, which I’ll refer to throughout as disgust sensitivity. Just for reference, here are some of the scenarios from the scale:

You are walking barefoot on concrete and you step on an earthworm.

And:

You see maggots on a piece of meat in an outdoor garbage pail.

And:

You take a sip of soda and realize that you drank from the glass that an acquaintance of yours had been drinking from.

In each case, you have to say how disgusting you find the scenario, from “not at all disgusting” to “extremely disgusting”.
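To make “composite score” a bit more concrete, here’s a minimal sketch of how item responses could be summed into one number per participant. It assumes a hypothetical 0–4 numeric coding of the response options, a simple unweighted sum, and a hypothetical set of reverse-scored items; the Disgust Scale’s own scoring instructions (e.g., which items are reversed or used as attention checks) may differ, so treat this as an illustration of the idea rather than the scoring I actually used.

```python
from typing import AbstractSet, Sequence

# Hypothetical numeric coding of each item:
# 0 = "not at all disgusting" ... 4 = "extremely disgusting".
SCALE_MIN, SCALE_MAX = 0, 4


def composite_disgust(
    responses: Sequence[int], reverse_items: AbstractSet[int] = frozenset()
) -> int:
    """Sum one participant's item responses into a single composite score.

    `reverse_items` holds 0-based indices of items (hypothetical) whose coding
    is flipped before summing, so that a higher score always means more disgust.
    """
    total = 0
    for i, r in enumerate(responses):
        if not SCALE_MIN <= r <= SCALE_MAX:
            raise ValueError(f"item {i}: response {r} outside {SCALE_MIN}-{SCALE_MAX}")
        total += (SCALE_MIN + SCALE_MAX - r) if i in reverse_items else r
    return total


# 26 items all answered at the midpoint (2) give a composite of 52.
print(composite_disgust([2] * 26))  # 52
```

Under this coding, 26 items give a possible range of 0 to 104, which at least leaves room for the ~30 to ~80 spread discussed later.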

Next up was the part about cultured meat. Participants were first asked about their familiarity with the concept, from “Not at all familiar (never heard of it before)” to “Very familiar (I regularly read news articles and keep updated with new developments)”. Then, they read a passage defining and describing cultured meat. This passage was cobbled together using passages from past work (e.g., this Faunalytics study I’ve written about before). Unlike past work, I manipulated whether cultured meat was described as “grown” or “manufactured”:

Cultured meat is real meat, grown / engineered from animal cells without the need to raise and slaughter farm animals. It has significant benefits for the environment, animals, and human health.

A small number of cells are extracted harmlessly from a living animal and more are constructed / grown using a growth medium. A similar (industrial) process is used in fermenting yogurt and beer.

In August 2013 researchers unveiled the world’s first cultured hamburger patty. Since then over 20 companies worldwide are developing cultured meat, though it is not yet commercially available because of the high costs involved in growing / manufacturing the meat.

Each participant read only one of the two versions. I was interested in whether this manipulation (“grown” vs. “manufactured”) affected their stated willingness to try cultured meat.

After reading the passage, participants answered a free-response question about their “general thoughts, feelings, and attitudes” about cultured meat. They also indicated how willing they were to try cultured meat, whether they would be willing to pay higher taxes to subsidize its production, and whether they would be willing to pay a premium for it. Those who said “yes” to the premium question were prompted to select how much, in percentage terms; those who said “no” were prompted to indicate how much of a discount would be required for them to purchase it.[2] These questions included a description of how the premiums and discounts worked, e.g.:

I.e., if conventional meat is $5 a pound, a 10% premium would be an additional 50 cents ($5.50 total), and a 20% premium would be an additional $1 ($6.00 total).
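The arithmetic in that description is just price × (1 + percent/100). Here it is as a small Python function; representing a discount as a negative percentage is my own convention for the sketch, not necessarily how the survey stored the answers.

```python
def adjusted_price(base_price: float, percent: float) -> float:
    """Price after a percentage premium (positive) or discount (negative), in dollars."""
    return round(base_price * (1 + percent / 100), 2)


print(adjusted_price(5.00, 10))   # 5.5  (10% premium)
print(adjusted_price(5.00, 20))   # 6.0  (20% premium)
print(adjusted_price(5.00, -10))  # 4.5  (10% discount)
```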

Finally, participants were debriefed on the purpose of the study.

How I analyzed the data

There were a lot of survey questions, which means there are a lot of ways one could look at the data. This section describes what I’ve done so far, but I’ve also made an anonymized version of the data (along with the analysis code) available on GitHub for anyone interested in exploring it further. Note that the final number of participants after excluding people who skipped through a bunch of questions was 184.

Demographic breakdown

As you’d expect with a sample of college undergraduates, the sample was skewed in a few different ways:

A very high proportion of participants identified as female (~84%); 14% identified as male and 1.6% identified as non-binary.

The sample also skewed liberal: about 70% answered that they were either “very liberal” or “somewhat liberal”.

About 20% of participants reported a household income over $150,000; assuming these answers are accurate, that’s pretty out of sync with the US median household income (~$70K).

This skew undeniably affects the quality of global, absolute estimates like “how many people in the United States are willing to try cultured meat?” Such an estimate would be unreliable from this skewed sample.

It’s less clear (though still possible) whether it affects relative differences, like “are men more willing to eat cultured meat than women?”

Disgust sensitivity was roughly normally distributed: most people clustered in the middle, with fewer in the “tails” of the distribution (i.e., either very high or very low sensitivity).

Describing the outcome measures

As I noted above, estimates of our outcome measures are bound to be skewed. But because it will still be helpful to know these later on, here they are:

About 72% of people said they were probably or definitely willing to try cultured meat.

About 18% of people said they’d be willing to pay higher taxes to help subsidize production of cultured meat.[3]

Only 7% of people said they’d be willing to pay a premium for cultured meat (another 42% said “maybe”).

Replicating past work

One of my first goals was replicating past work. In particular, past work has identified a few systematic predictors of willingness to try cultured meat:

Gender: men generally indicate higher willingness than women.

Disgust sensitivity: people with higher disgust sensitivity usually say they are less willing to try cultured meat.

Politics: liberals indicate more willingness to try cultured meat.

To replicate these findings, I constructed a multiple linear regression model (i.e., one with several predictors) predicting willingness to try.[4] This model included a bunch of the demographic predictors I described above, as well as the composite measure of disgust sensitivity.

Overall, this model had an R^2 of .135, meaning all these variables together explained roughly 13.5% of the variance in participants’ willingness to try cultured meat. (Whether or not that’s “good” is a judgment call, but keep in mind that correlations are generally fairly low in social science, simply because behavior is quite complex.)
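For anyone unfamiliar with how R^2 comes out of a regression, here’s a minimal sketch using plain NumPy least squares: R^2 = 1 − (residual sum of squares / total sum of squares). This is not the analysis code from the GitHub repo; the data below are simulated, and the two predictors (a disgust score and a 0/1 indicator) are hypothetical stand-ins for the real variables.

```python
import numpy as np


def fit_ols(X: np.ndarray, y: np.ndarray) -> tuple[np.ndarray, float]:
    """Ordinary least squares with an intercept; returns (coefficients, R^2).

    coefficients[0] is the intercept; the rest follow the columns of X.
    """
    design = np.column_stack([np.ones(len(y)), X])  # prepend intercept column
    coefs, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ coefs
    ss_res = float(residuals @ residuals)          # unexplained variation
    ss_tot = float(((y - y.mean()) ** 2).sum())    # total variation around the mean
    return coefs, 1.0 - ss_res / ss_tot


# Simulated data only (NOT the survey data): a disgust score and a 0/1 indicator.
rng = np.random.default_rng(0)
n = 184
disgust = rng.normal(55, 12, n)
male = rng.integers(0, 2, n)
willingness = 1.5 - 0.02 * disgust + 0.5 * male + rng.normal(0, 1, n)

coefs, r2 = fit_ols(np.column_stack([disgust, male]), willingness)
print("intercept, disgust, male:", coefs.round(3))
print("R^2:", round(r2, 3))
```

Because most of the simulated variation comes from the noise term, the R^2 here is modest, which is roughly the situation the real model is in.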

Looking at a few variables in particular, we see that disgust sensitivity is significantly and negatively correlated with willingness to try (even after controlling for the other demographic variables). According to the model, each 1-unit increase in a person’s disgust sensitivity predicts a decrease of ~0.02 in their willingness to try cultured meat. That isn’t a huge change per unit, but it means that going from ~30 (on the lower end of disgust sensitivity) to ~80 (on the higher end) corresponds, on average, to a 1-point drop in willingness to try.
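Spelled out, treating the model as linear in disgust sensitivity D and holding the other predictors fixed, the predicted change in willingness W is just the slope times the change in D:

```latex
\Delta \widehat{W} = \hat{\beta}_{D}\,\Delta D \approx (-0.02) \times (80 - 30) = -1.0
```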

The scatterplot below shows disgust on the x-axis and willingness to try on the y-axis (rescaled from -2 to 2). It’s an odd-looking plot because willingness to try is technically a discrete variable; you can’t have a willingness of 1.5.[5]
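Here’s a sketch of the rescaling onto -2 to 2, plus one common trick for making plots of a discrete variable easier to read: adding a little random “jitter” for display only. The five response labels below are hypothetical (the survey’s exact wording may differ), and jitter is a plotting aid I’m suggesting, not something used in the analysis.

```python
import random

# Hypothetical response labels, in order; the survey's exact wording may differ.
LIKERT_5 = [
    "Definitely not",
    "Probably not",
    "Not sure",
    "Probably",
    "Definitely",
]
# Map the five ordered labels onto -2, -1, 0, 1, 2.
RESCALED = {label: i - 2 for i, label in enumerate(LIKERT_5)}


def jittered(values, width=0.15, seed=0):
    """Add small uniform noise for plotting only; never analyze the jittered values."""
    rng = random.Random(seed)
    return [v + rng.uniform(-width, width) for v in values]


scores = [RESCALED[a] for a in ["Probably", "Definitely not", "Not sure"]]
print(scores)  # [1, -2, 0]
```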

There was also a main effect of gender: as in past work, male participants reported a higher willingness to try cultured meat. According to the model, once you account for other factors, this corresponded to a difference of about 0.48 in willingness to try. The effect was just barely significant (p = .048), which I chalk up mostly to this being a relatively small sample.

Unlike past work, this pilot didn’t replicate the correlation with political affiliation. This could be because the relationship is weak to begin with, or because the sample is so skewed that there wasn’t enough data from conservative participants to reliably estimate the regression line.

Novel findings

I also found out some new things, most of which are exploratory.

First, I used a simple sentiment analysis tool in Python’s nltk package to calculate the average sentiment of each person’s free response (in which they were asked how they felt about cultured meat). Here’s how the scores were distributed: mostly middling to positive, with some negative responses.
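As a rough sketch of what “average sentiment of a response” could mean: score each sentence, then average the scores. I’m assuming per-sentence averaging here, and the scorer is passed in as a function. With nltk, a natural choice is its VADER analyzer (shown in comments, since it needs the `vader_lexicon` resource downloaded); the toy word-list scorer is a hypothetical stand-in so the sketch runs on its own.

```python
import re
from statistics import mean
from typing import Callable


def average_sentiment(text: str, score_sentence: Callable[[str], float]) -> float:
    """Average a per-sentence sentiment score over one free-response answer."""
    # Naive split after ., !, or ? followed by whitespace; nltk.sent_tokenize is more robust.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    return mean(score_sentence(s) for s in sentences)


# With nltk installed (and nltk.download("vader_lexicon") run once), one could use:
#   from nltk.sentiment.vader import SentimentIntensityAnalyzer
#   sia = SentimentIntensityAnalyzer()
#   average_sentiment(answer, lambda s: sia.polarity_scores(s)["compound"])

# Toy stand-in scorer (hypothetical word lists) so this runs without nltk.
POSITIVE = {"great", "good", "interesting"}
NEGATIVE = {"gross", "weird", "bad"}


def toy_score(sentence: str) -> float:
    """(# positive words - # negative words) / # words."""
    words = re.findall(r"[a-z']+", sentence.lower())
    return sum((w in POSITIVE) - (w in NEGATIVE) for w in words) / max(len(words), 1)


print(average_sentiment("Sounds gross. But interesting!", toy_score))  # 0.0
```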

Perhaps unsurprisingly, people’s sentiment about cultured meat (as expressed in their free response answer) was positively correlated with their willingness to try it:

A more surprising finding (though a definitely exploratory one) was an interaction between condition (i.e., whether cultured meat was described as “grown” or “manufactured”) and disgust sensitivity in predicting willingness to try it. Interactions can be confusing to explain, but what this one means is: the relationship between disgust sensitivity and willingness to try cultured meat was stronger for people in the “grown” condition than for people in the “manufactured” condition. I’m still not sure why, and the finding may turn out to be entirely spurious.
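One way to see what an interaction term does: add a predictor that is the product of disgust and a 0/1 condition indicator. The coefficient on disgust is then the slope in the “manufactured” condition, and the product term’s coefficient is how much steeper (or shallower) the slope is in the “grown” condition. This is a minimal NumPy sketch with made-up, noiseless data and hypothetical variable names, not the model from the repo.

```python
import numpy as np


def disgust_slopes_by_condition(disgust, grown, willingness):
    """Fit willingness ~ disgust * condition; return the disgust slope in each condition.

    `grown` is a 0/1 indicator (1 = "grown" wording, 0 = "manufactured").
    """
    disgust, grown, y = map(np.asarray, (disgust, grown, willingness))
    design = np.column_stack([
        np.ones(len(y)),   # intercept
        disgust,           # disgust slope when grown == 0 ("manufactured")
        grown,             # intercept shift for "grown"
        disgust * grown,   # interaction: extra disgust slope for "grown"
    ])
    b, *_ = np.linalg.lstsq(design, y, rcond=None)
    return {"manufactured": float(b[1]), "grown": float(b[1] + b[3])}


# Made-up example: a steeper (more negative) disgust slope in the "grown" condition.
d = np.tile(np.arange(30.0, 81.0, 10.0), 2)
g = np.repeat([0, 1], 6)
y = np.where(g == 1, 2.0 - 0.03 * d, 1.0 - 0.01 * d)
print(disgust_slopes_by_condition(d, g, y))
```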

When I added these new factors (sentiment and the condition-by-disgust interaction) to the linear regression model, the R^2 improved to .25, meaning that altogether these variables explain about 25% of the variance in willingness to try cultured meat. That still leaves a lot unexplained!

I also looked at two other measures:

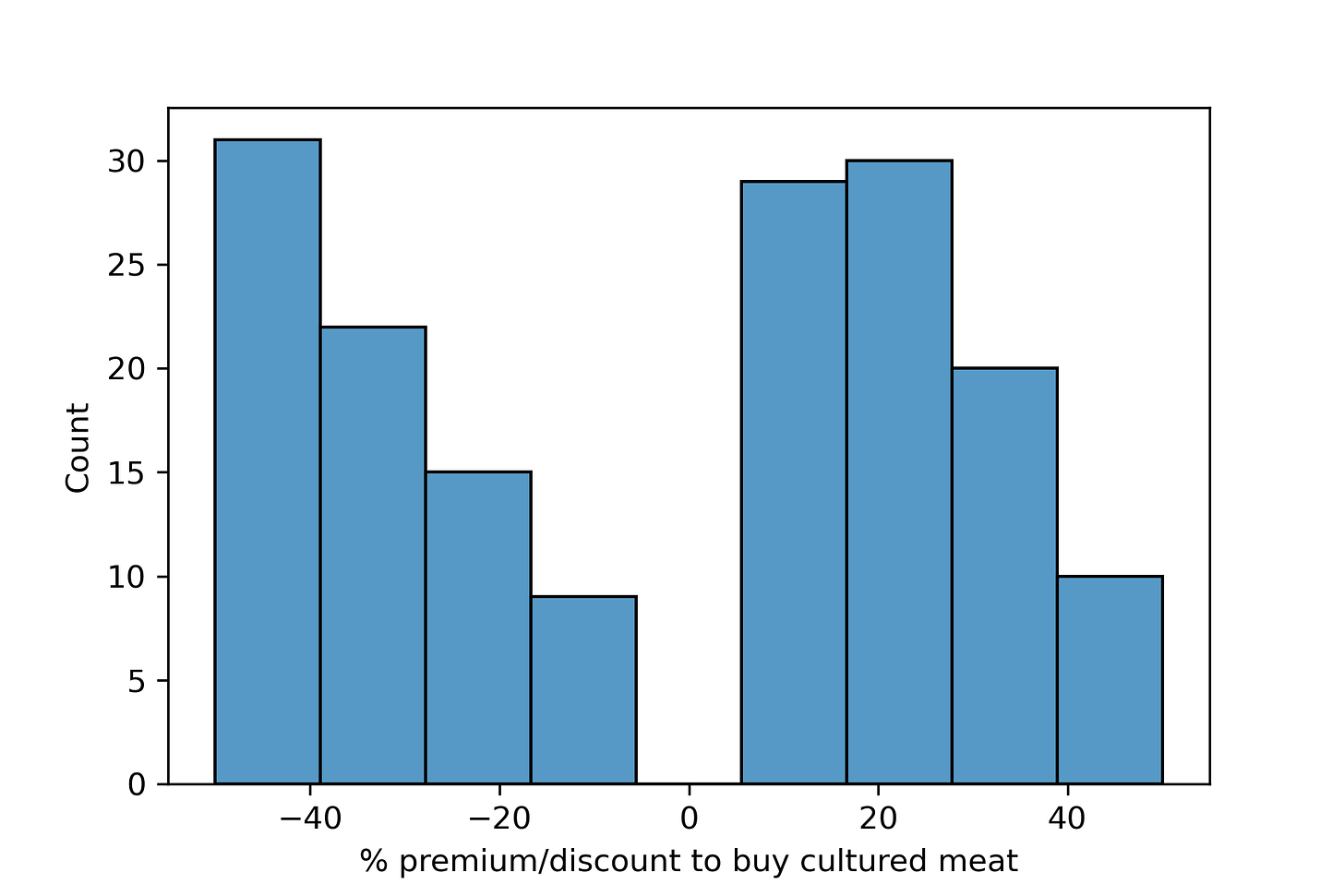

The % premium people would pay (or discount % required) for cultured meat.

Whether people would be willing to pay taxes to subsidize cultured meat research and production.

In the former case, the distribution looked like this:

The caption says it all, but put another way: if this is true (and generalizable), these data—in contrast to the Faunalytics study I’ve described before—suggest that we’re going to need to make cultured meat cheaper than “regular” meat to get uptake.
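Because “yes” and “no” respondents answered different follow-up questions, plotting them as one distribution requires putting both on a single axis. A minimal sketch of one way to do that: premiums as positive percentages, required discounts as negative ones, and the “None of the options listed here would convince me” answer kept as missing rather than forced onto the scale. This encoding is my own illustration; it only handles yes/no answers, since how “maybe” answers were routed isn’t specified above.

```python
from typing import Optional


def signed_percent(pays_premium: bool, percent: Optional[float]) -> Optional[float]:
    """Put premiums and required discounts on one axis.

    Premiums become positive, required discounts negative. `percent=None`
    (hypothetically) stands for "None of the options listed here would convince me",
    which has no numeric value and is kept as missing.
    """
    if percent is None:
        return None
    if percent < 0:
        raise ValueError("give percent as a non-negative magnitude")
    return percent if pays_premium else -percent


answers = [(True, 10), (False, 20), (False, None)]
print([signed_percent(p, pct) for p, pct in answers])  # [10, -20, None]
```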

Interestingly, but also discouragingly, none of the measured factors besides sentiment predicted one’s willingness to pay a premium (or the discount required).

Finally, when it came to taxes, the two variables that best predicted whether someone would pay additional taxes were sentiment (more positive sentiment was correlated with willingness to pay higher taxes) and political affiliation (liberals were more willing to pay higher taxes[6]).

What I learned

As I’ve noted throughout, this was a pilot study. That means everything here should be taken with a grain of salt.

That being said, these are some of my biggest takeaways:

Certain previous empirical findings are pretty reliable: namely, disgust sensitivity and gender are both correlated with stated willingness to try cultured meat.

One’s “thoughts and feelings” about cultured meat (as measured in the sentiment scores) are reliably and strongly correlated with one’s willingness to try it, pay taxes to help subsidize its production, and pay a premium to purchase it.

Even accounting for all the factors I measured, a statistical model only explains ~25% of the variance in how willing people say they are to try cultured meat.

There’s possibly an interesting (and as yet unreported) interaction between disgust sensitivity and whether cultured meat is described as grown or manufactured. However, this finding was discovered in an exploratory analysis.

The first takeaway is encouraging: it places past work on even firmer ground, and also gives me confidence that I didn’t mess anything up too terribly in my own survey.

The same goes for the second takeaway: it may seem obvious that how one feels about cultured meat will predict these outcome measures, but a) as far as I know, it hasn’t been measured before; and b) again, it gives me confidence that these instruments and tools are picking up on something “real”.

The third takeaway is aspirational: it makes me wonder what other factors can help explain someone’s willingness to try cultured meat. Just off the top of my head, I have a few candidates I’m excited to explore:

Empathy, particularly as directed towards non-human animals.

Food neophobia; this has been shown to be very predictive in past work, and I’d like to know just how much variance we can explain once we account for it.

Anxiety or concern about climate change; given that reducing one’s carbon footprint is a big reason for reducing meat consumption, I’m wondering whether people with more climate-related anxiety are also more willing to try cultured meat.

Specific subsets of the disgust scale: disgust sensitivity is a composite measure, but it’s possible some questions are particularly predictive of one’s willingness to try cultured meat. Which ones?
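For the last question, a natural first pass would be to correlate each item with willingness to try. Here’s a minimal NumPy sketch (Pearson correlation per column; the function name and data layout are hypothetical). With 26 items, a few will look “predictive” by chance alone, so this would be squarely exploratory; a correction such as Bonferroni (e.g., 0.05 / 26 as the per-item threshold) or a held-out sample would be needed before taking any single item seriously.

```python
import numpy as np


def item_correlations(items, outcome) -> np.ndarray:
    """Pearson correlation between each item (column of `items`) and `outcome`.

    `items` is a participants x items array. An item with zero variance yields nan.
    """
    items = np.asarray(items, dtype=float)
    outcome = np.asarray(outcome, dtype=float)
    centered_items = items - items.mean(axis=0)   # center each item column
    centered_y = outcome - outcome.mean()
    numer = centered_items.T @ centered_y          # covariance numerators, one per item
    denom = np.sqrt((centered_items ** 2).sum(axis=0) * (centered_y ** 2).sum())
    return numer / denom


# Toy example: item 0 tracks the outcome, item 1 runs opposite to it.
y = np.arange(5.0)
print(item_correlations(np.column_stack([y, -2 * y + 1, y ** 2]), y).round(3))
```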

And finally, the (possible) interaction between how cultured meat is described (“grown” vs. “manufactured”) and disgust sensitivity is exciting: I’d like to follow up on this in a much larger, pre-registered study with a more representative sample.

Which brings me to my next steps: my goal is to design a similar survey (making some tweaks here and there), recruit a representative sample using Prolific, and see whether these results hold up.

[1] Note that “occupation” was the only free response question in this part of the survey, and also the only one I haven’t included in the statistical models.

[2] One of the options was “None of the options listed here would convince me to buy cultured meat”.

[3] This is clearly an item where a baseline would’ve been useful, e.g., what percentage of people would pay higher taxes for universal healthcare, better public transit, and so on.

[4] Another quick caveat: technically, willingness to try is an ordinal variable, which means linear regression isn’t entirely appropriate. A more appropriate analysis, particularly for precise estimates, would be ordinal regression. However, linear regression is quite commonly used for ordinal variables, and it’s (in my opinion) mostly fine if your goal is a rough estimate of the direction of the relationship between two variables, and a sense of whether that relationship is statistically significant, which is all we can really hope for here anyway.

[5] Technically, this means linear regression isn’t an ideal analysis framework; you really want to use something like ordinal regression. But I defend my decision by maintaining that this primarily matters if you want really precise parameter estimates; in this case, I’m mostly looking for a “directional” relationship.

[6] Again, we’d want a baseline here: liberals presumably are more comfortable paying higher taxes for lots of things. Is their willingness here larger or smaller than their willingness to subsidize other government programs? Which ones?
